Introduction

Technological advances in computer vision, mechatronics, artificial intelligence, and machine learning have enabled the development and implementation of remote sensing technologies for plant, weed, pest, and disease identification and management. These advances provide a unique opportunity to develop intelligent agricultural systems for precision applications. This document discusses the concepts of artificial intelligence (AI) and machine learning and presents several examples to demonstrate the application of AI in agriculture.

Artificial Intelligence and Machine Learning

Artificial intelligence (AI) and machine learning (ML) are two promising areas in computer science, automation, and robotics. Machine learning is an application of artificial intelligence based on the idea that a machine (e.g., a computer or microcontroller) can learn from data and identify patterns in them, which can reduce the need for human intervention and the errors that come with it. The process in which a computer "learns" from data without being explicitly programmed, and adjusts to new inputs to accomplish specific tasks (e.g., self-driving cars), is described as machine learning. This process can require a vast amount of data (e.g., images) to "train" the AI technology or system. Machine learning and artificial intelligence can be applied to modern agriculture and have the potential to change it (Ampatzidis, De Bellis, and Luvisi 2017; Luvisi, Ampatzidis, and De Bellis 2016). This publication presents several examples of AI-based agricultural technologies and applications.

Artificial Intelligence and Object Detection

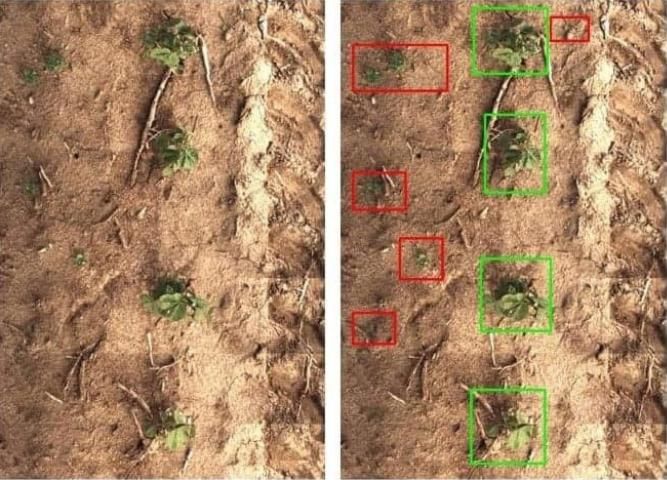

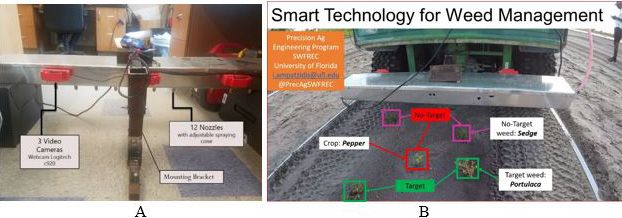

Image-based pattern recognition systems have been developed for several agricultural applications. One example is the smart (precision) sprayer developed by Blue River Technology (http://www.bluerivertechnology.com). The smart sprayer utilizes a vision-based system and artificial intelligence to detect and identify individual plants (such as cotton or wheat) and weeds, and spray only on the weeds (Figure 1). This can reduce the required quantity of herbicide by more than 90% compared to traditional broadcast sprayers. Traditional broadcast sprayers usually treat the entire field to control pest populations, potentially making applications to areas that do not require treatment. Applying agrochemicals only where pests occur could reduce costs, risk of crop damage, excess pesticide residue, and environmental impact. A similar smart technology for precision pest management has been developed through the Precision Agriculture Engineering program at the UF/IFAS Southwest Florida Research and Education Center (UF/IFAS SWFREC) for specialty crops (Figure 2). In the initial evaluation experiments, the sprayer was programmed to spray only on a specific weed (e.g., Portulaca weed, Figure 2B) and not on the bare ground, the crop, or any other weeds or plants (e.g., pepper plant and sedge weed, Figure 2B). A video demonstration of this smart technology is available at https://twitter.com/i/status/1045013127593644032.
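The "spray only on the target weed" behavior described above can be sketched as a simple decision rule applied to the output of a vision model. This is a minimal illustration and not the Blue River Technology or UF/IFAS implementation: the `Detection` record, the class labels, the confidence threshold, and the boom and nozzle layout are all hypothetical.

```python
from collections import namedtuple

# Hypothetical output of a vision model for one plant in the camera frame:
# predicted class, model confidence in [0, 1], and lateral position (meters).
Detection = namedtuple("Detection", ["label", "confidence", "x_m"])


def nozzles_to_fire(detections, target_label="portulaca",
                    min_confidence=0.8, boom_width_m=2.0, n_nozzles=8):
    """Return the sorted indices of the nozzles that should spray.

    Only confident detections of the target weed trigger a nozzle; the crop,
    bare ground, and any other weeds are left untreated. All parameter
    values are illustrative assumptions.
    """
    nozzle_width = boom_width_m / n_nozzles
    fire = set()
    for d in detections:
        if d.label != target_label or d.confidence < min_confidence:
            continue
        if not 0.0 <= d.x_m < boom_width_m:
            continue  # plant lies outside the reach of the boom
        index = min(int(d.x_m // nozzle_width), n_nozzles - 1)
        fire.add(index)
    return sorted(fire)


frame = [
    Detection("portulaca", 0.93, 0.30),  # target weed: spray
    Detection("pepper", 0.97, 0.90),     # crop: never spray
    Detection("sedge", 0.88, 1.40),      # non-target weed: skip
    Detection("portulaca", 0.55, 1.70),  # too uncertain: skip
]
print(nozzles_to_fire(frame))
```

Because only the nozzle above a confirmed target opens, the volume applied scales with the number of target weeds rather than with the area of the field, which is the source of the herbicide savings relative to broadcast spraying.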

Credit: Blue River Technology

Credit: UF/IFAS

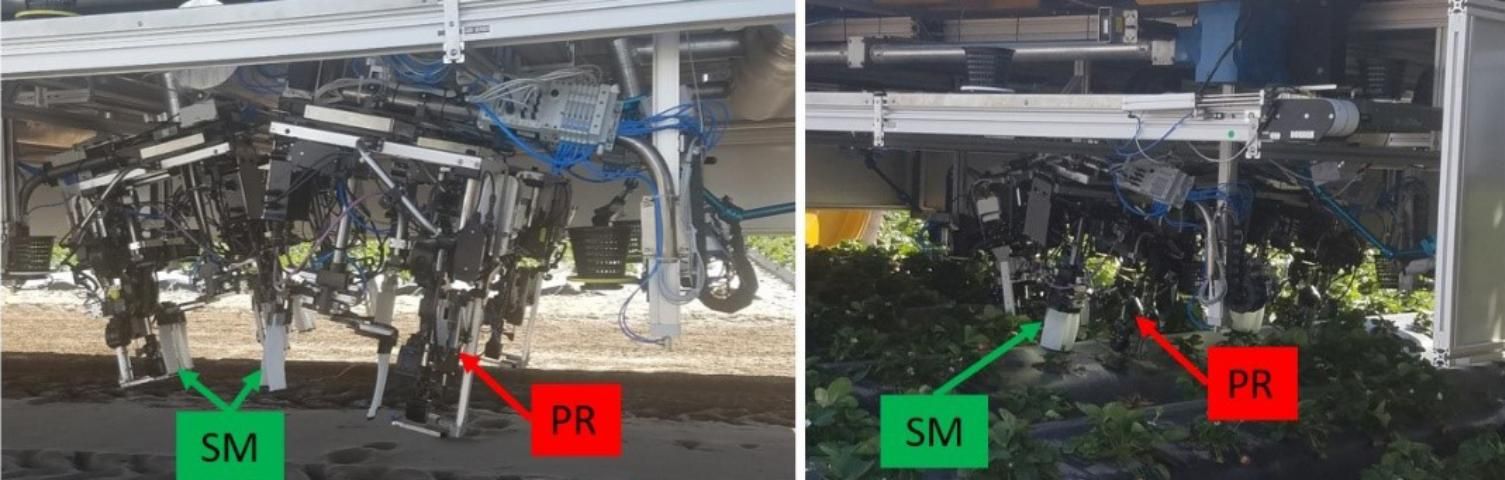

Another example is the robotic strawberry harvester developed by Harvest CROO Robotics (HCR, http://harvestcroorobotics.com). The strawberry harvester detects and locates ripe berries using machine vision and artificial intelligence. Among other subsystems, the HCR strawberry harvester includes an autonomous platform (Figure 3) to move through strawberry fields, several picking robots (Figure 4A), and support mechanisms (Figure 4B) to move foliage aside. Fresh-market strawberries are perishable and typically hand-harvested. Strawberry growers face labor shortages, which drive up harvest costs and increase the risk of incomplete harvest. The number of domestic farmworkers has decreased substantially, and growers are unable to fully harvest their marketable fruit (Guan and Wu 2018). The costs to recruit temporary foreign agricultural guest workers through the H-2A program are quite high (Roka, Simnitt, and Farnsworth 2017). Additionally, strawberry growers in both Florida and California face stiff competition from Mexican berry growers (Guan et al. 2015). Development of mechanical harvesting technologies and the use of AI could simultaneously reduce growers' dependence on manual labor, decrease harvesting costs, and improve their overall competitiveness.

Credit: UF/IFAS

Credit: UF/IFAS

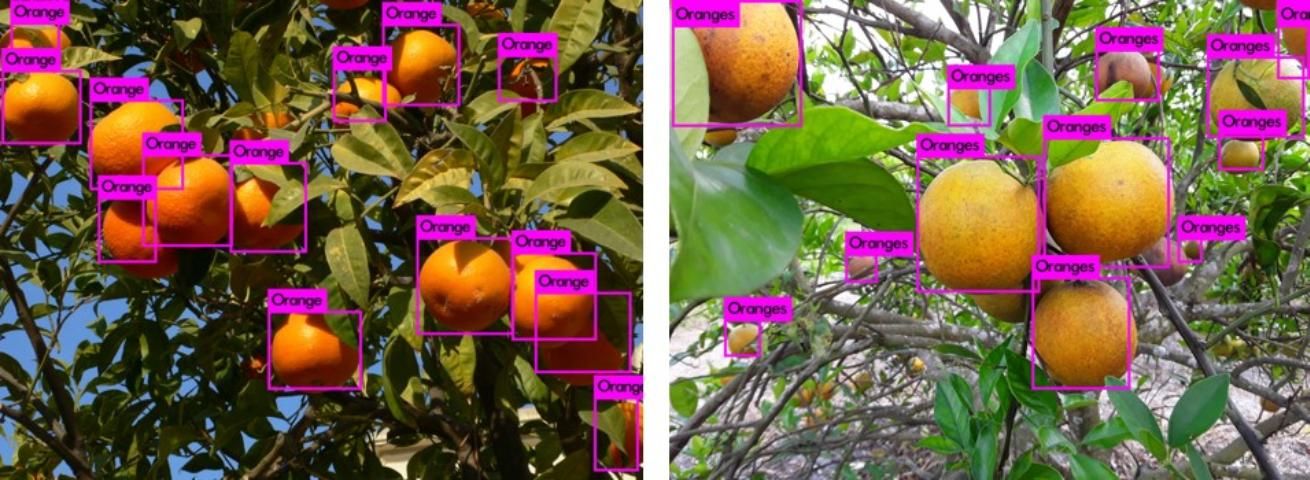

Our team at the Precision Agriculture Engineering program at UF/IFAS SWFREC is developing AI-based systems to detect, distinguish, and categorize several "objects" for Florida agricultural applications. We are developing vision- and AI-based systems to detect and count citrus trees (using aerial images, Figure 5), fruit (Figure 6), and flowers (citrus and vegetables), and to detect and distinguish weeds and pests. We have demonstrated that transfer learning (an AI approach) can be leveraged when it is not possible to collect thousands of images to train the AI system (Ampatzidis et al. 2018a; Cruz et al. 2017). Transfer learning is the reuse of a previously trained neural network for a new problem.
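The idea behind transfer learning can be illustrated with a toy example in which a "pretrained" layer is kept frozen and only a small new output layer is trained on a handful of labeled samples. This is a sketch under stated assumptions and not the method used in the cited studies: the weights in `PRETRAINED_W`, the three-value color summaries standing in for images, and the labels are all hypothetical, and a real system would reuse a deep convolutional network rather than a single layer.

```python
import math

# Stand-in for a network trained earlier on a large, unrelated dataset.
# These weights are hypothetical and are never updated below (frozen).
PRETRAINED_W = [[0.9, -0.4, 0.2],
                [-0.3, 0.8, 0.5]]


def extract_features(pixels):
    """Frozen feature extractor: ReLU(W @ pixels)."""
    return [max(0.0, sum(w * p for w, p in zip(row, pixels)))
            for row in PRETRAINED_W]


def train_head(samples, labels, epochs=500, lr=0.5):
    """Train only a new logistic-regression head on the frozen features."""
    feats = [extract_features(s) for s in samples]
    w, b = [0.0] * len(feats[0]), 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            z = sum(wi * fi for wi, fi in zip(w, f)) + b
            err = 1.0 / (1.0 + math.exp(-z)) - y  # gradient of the log loss
            w = [wi - lr * err * fi for wi, fi in zip(w, f)]
            b -= lr * err
    return w, b


def predict(head, pixels):
    """Return 1 (symptomatic) or 0 (healthy) for one sample."""
    w, b = head
    f = extract_features(pixels)
    return 1 if sum(wi * fi for wi, fi in zip(w, f)) + b > 0 else 0


# Six hypothetical samples: mean (red, green, blue) of a leaf image.
samples = [[0.20, 0.80, 0.20], [0.25, 0.75, 0.20], [0.15, 0.85, 0.25],  # healthy
           [0.70, 0.40, 0.20], [0.65, 0.45, 0.15], [0.75, 0.35, 0.20]]  # symptomatic
labels = [0, 0, 0, 1, 1, 1]

head = train_head(samples, labels)
print(predict(head, [0.225, 0.775, 0.20]), predict(head, [0.675, 0.425, 0.175]))
```

Only the two weights and the bias of the new head are fitted, which is why a small labeled set can be enough when the reused layers already produce informative features.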

Credit: UF/IFAS

Credit: UF/IFAS

Artificial Intelligence and Disease Detection

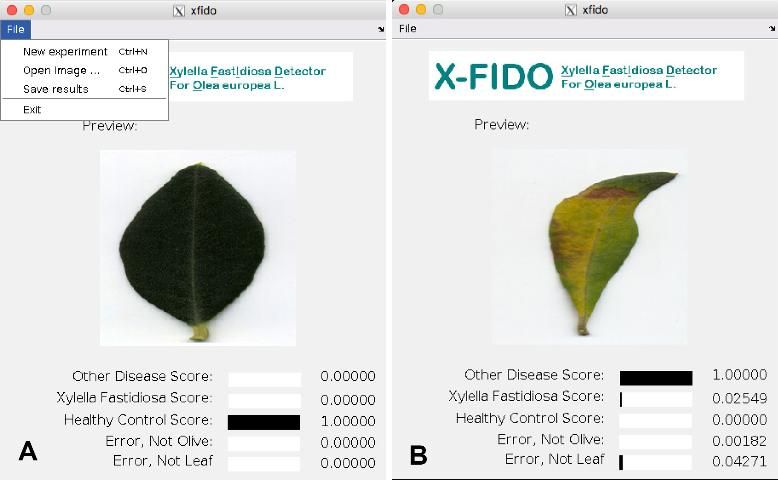

Vision-based pattern recognition and the use of deep learning (an AI approach) to identify plants and detect diseases are not new concepts. Computer vision techniques to identify plant diseases were described as early as the 2000s. Now, machine vision and AI can be used to distinguish between a variety of diseases with similar symptoms and reduce diagnosis time and cost (Abdulridha et al. 2018). Cruz et al. (2017) developed a vision-based X-FIDO program (Figure 7) to detect symptoms of olive quick decline syndrome (OQDS) on leaves of Olea europaea L. infected by Xylella fastidiosa with a true positive rate of 98.60 ± 1.47%. This system utilizes a deep learning convolutional neural network (DP-CNN) and a novel abstraction-level data fusion algorithm to improve detection accuracy.

Credit: Cruz et al. (2017)

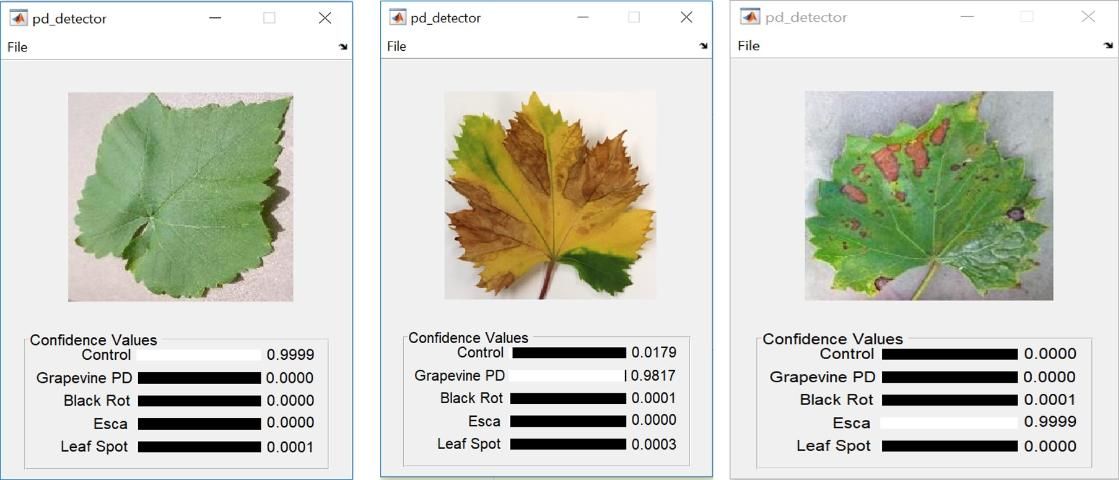

Ampatzidis et al. (2018a) and Ampatzidis and Cruz (2018) developed vision-based artificial intelligence disease detection systems (Figure 8) to identify grapevine Pierce's disease (PD) and grapevine yellows (GY) and distinguish them from other diseases (e.g., black rot, esca, leaf spot). PD and GY symptoms are easily confused with those of other diseases and conditions that can cause vine stress. For PD detection, the results are promising, with 99.23 ± 0.64% accuracy, a 98.08 ± 1.67% F1-score, and a Matthews correlation coefficient (MCC) of 0.9761 ± 2.05. For GY, the system obtains 92.0% accuracy and an MCC of 0.832. For reference, a baseline system that combines local binary patterns (LBP) and color histograms with a support vector machine (SVM) obtains only 26.7% accuracy and an MCC of -0.124. This technology has the potential to automate the detection of plant disease symptoms.
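The three metrics reported above (accuracy, F1-score, and the Matthews correlation coefficient) can all be computed from the four cells of a binary confusion matrix. The sketch below uses the standard definitions; the counts are hypothetical and are not taken from the cited studies.

```python
import math


def binary_metrics(tp, fp, fn, tn):
    """Accuracy, F1-score, and Matthews correlation coefficient (MCC).

    tp/fp/fn/tn are the true-positive, false-positive, false-negative,
    and true-negative counts. Undefined ratios are reported as 0.0.
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    f1_den = 2 * tp + fp + fn
    f1 = 2 * tp / f1_den if f1_den else 0.0
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / mcc_den if mcc_den else 0.0
    return {"accuracy": accuracy, "f1": f1, "mcc": mcc}


# Hypothetical balanced test set: 49 diseased and 51 healthy leaves.
good = binary_metrics(tp=48, fp=2, fn=1, tn=49)

# Hypothetical imbalanced set where the model labels every leaf "diseased".
naive = binary_metrics(tp=90, fp=10, fn=0, tn=0)

print(good)
print(naive)
```

Accuracy and F1-score range from 0 to 1 (often shown as percentages), whereas the MCC ranges from -1 to 1, with 0 indicating performance no better than chance. In the second case the accuracy is 0.90 even though the model has learned nothing, while the MCC is 0, which is why the MCC is useful when classes are imbalanced.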

Credit: Cruz, El-Kereamy, and Ampatzidis (2018)

Artificial Intelligence and Pest Detection

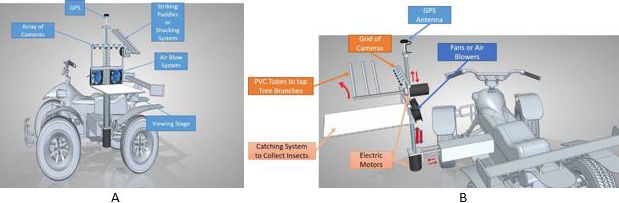

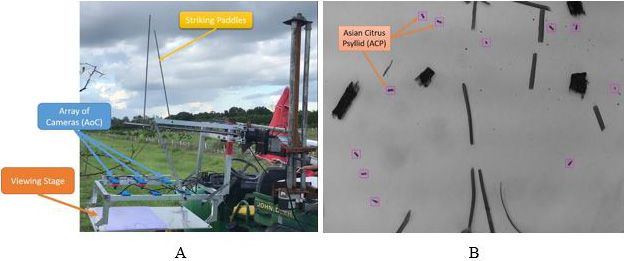

Growers face challenges from numerous pests. For example, the Asian citrus psyllid (ACP) is a key pest of citrus in Florida because it is a vector of citrus huanglongbing (HLB). These pests and diseases can cause serious yield loss and also reduce the ability of Florida growers to export or transport fresh fruit nationally and internationally. Growers and inspectors detect most of the pests in the field through visual inspection. However, visual detection is labor-intensive, expensive, and limited by the number of inspectors. The industry needs an automated method for detection of ACP to assist growers in making timely management decisions and to limit disease spread. Dr. Ampatzidis and Dr. Stansly are developing a vision-based automated system to detect, geo-locate, and count ACP in the field (Ampatzidis, Stansly, and Meirelles 2018b). This technology, mounted on a mobile vehicle (Figure 9), utilizes machine vision and a deep learning convolutional neural network (DP-CNN) to accurately detect and count ACP (Figure 10).
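The "detect, geo-locate, and count" step can be pictured as attaching a position fix to each camera frame and counting the detections that the model is confident about. The sketch below is illustrative only and does not describe the patented system: the `Frame` record, the coordinates, the confidence scores, and the threshold are hypothetical.

```python
from collections import namedtuple

# Hypothetical record for one camera frame: the GPS fix of the vehicle when
# the image was taken and the confidence of each candidate insect detection.
Frame = namedtuple("Frame", ["lat", "lon", "scores"])


def count_per_location(frames, min_confidence=0.5):
    """Return one geo-referenced count per frame.

    A candidate is counted only when the detector's confidence reaches the
    (assumed) threshold, which filters out weak, likely false detections.
    """
    records = []
    for frame in frames:
        count = sum(1 for score in frame.scores if score >= min_confidence)
        records.append({"lat": frame.lat, "lon": frame.lon, "count": count})
    return records


frames = [
    Frame(26.4620, -81.4400, [0.91, 0.62, 0.30]),
    Frame(26.4621, -81.4400, []),
    Frame(26.4622, -81.4401, [0.77, 0.45]),
]
records = count_per_location(frames)
total = sum(r["count"] for r in records)
print(records)
print("total insects counted:", total)
```

Keeping the position with each count, rather than only a field-wide total, is what allows the results to be mapped later.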

Credit: Ampatzidis, Stansly, and Meirelles (2018b)

Credit: Ampatzidis, Stansly, and Meirelles (2018b)

Conclusion

Developing an accurate vision-based artificial intelligence technology involves a learning (training) process that requires collecting and photographing many samples in a natural and dynamic environment to accurately represent the conditions in which the device will operate. The performance of a deep learning model typically improves as the volume of high-quality data increases, enabling the system to overcome a variety of imaging issues, such as variable lighting conditions, poor alignment, and improper cropping of the object. These AI algorithms and technologies can be integrated with mobile hardware to provide a platform that has the potential to cost-effectively detect and geo-locate pests and diseases and generate a prescription map (compatible with precision equipment) for variable rate application of agrochemicals. Using these technologies, pesticide applicators will be better equipped to apply the right amount of pesticides only where they are needed, decrease pesticide use and expenses, and reduce potential environmental impact. These technologies can also be used for the development of accurate and cost-effective mechanical harvesting or pruning technologies for fruit and vegetables. More research is needed to develop low-cost and efficient AI-based systems for precision agricultural applications.
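As an illustration of how geo-located detections could become a prescription map, the sketch below bins pest counts into grid cells and assigns an application rate to each cell. It is a simplified example under stated assumptions: positions are given in meters in a local field frame rather than as GPS coordinates, and the cell size, action threshold, and rates are hypothetical values, not agronomic recommendations.

```python
from collections import defaultdict


def prescription_map(observations, cell_size_m=10.0, threshold=3,
                     low_rate=0.0, high_rate=1.0):
    """Map grid cells to an application rate (fraction of the full rate).

    observations: iterable of (x_m, y_m, pest_count) tuples.
    A cell receives `high_rate` when its summed pest count reaches
    `threshold`, otherwise `low_rate`. All defaults are assumptions.
    """
    totals = defaultdict(int)
    for x_m, y_m, count in observations:
        cell = (int(x_m // cell_size_m), int(y_m // cell_size_m))
        totals[cell] += count
    return {cell: (high_rate if total >= threshold else low_rate)
            for cell, total in totals.items()}


observations = [
    (2.0, 3.0, 1), (7.5, 4.0, 4),    # both fall in cell (0, 0): 5 pests
    (12.0, 3.0, 0), (15.0, 8.0, 2),  # both fall in cell (1, 0): 2 pests
    (25.0, 1.0, 5),                  # cell (2, 0): 5 pests
]
rates = prescription_map(observations)
for cell in sorted(rates):
    print(cell, rates[cell])
```

A variable rate controller could then look up the rate for the cell it is currently crossing; cells that were never surveyed are absent from the map and would need a default chosen by the applicator.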

References

Abdulridha, J., Y. Ampatzidis, R. Ehsani, and A. de Castro. 2018. "Evaluating the performance of spectral features and multivariate analysis tools to detect laurel wilt disease and nutritional deficiency in avocado." Computers and Electronics in Agriculture 155: 203–211.

Ampatzidis, Y., L. De Bellis, and A. Luvisi. 2017. "iPathology: Robotic applications and management of plants and plant diseases." Sustainability 9(6): 1010. doi:10.3390/su9061010.

Ampatzidis, Y. and A. C. Cruz. 2018. "Plant disease detection utilizing artificial intelligence and remote sensing." In International Congress of Plant Pathology (ICPP) 2018: Plant Health in a Global Economy, July 29–August 3. Boston, MA.

Ampatzidis, Y., A. C. Cruz, R. Pierro, A. Materazzi, A. Panattoni, L. De Bellis, and A. Luvisi. 2018a. "Vision-based system for detecting grapevine yellow diseases using artificial intelligence." In XXX International Horticultural Congress, II International Symposium on Mechanization, Precision Horticulture, and Robotics, 12–16 August, 2018. Istanbul, Turkey.

Ampatzidis, Y., P. A. Stansly, and V. H. Meirelles. 2018b. "Automated systems and methods for monitoring and mapping insects in orchards." U.S. provisional patent application No. 62/696,089.

Blue River Technology. 2018. "Optimize Every Plant." Accessed December 14, 2018. http://www.bluerivertechnology.com

Cruz, A. C., A. El-Kereamy, and Y. Ampatzidis. 2018. "Vision-based grapevine Pierce's disease detection system using artificial intelligence." In ASABE Annual International Meeting, July 29–August 1. Detroit, MI.

Cruz, A. C., A. Luvisi, L. De Bellis, and Y. Ampatzidis. 2017. "X-FIDO: an effective application for detecting olive quick decline syndrome with novel deep learning methods." Frontiers in Plant Science 8: 1741. https://doi.org/10.3389/fpls.2017.01741

Guan, Z. and F. Wu. 2018. Modeling the Choice between Foreign Guest Workers or Domestic Workers. Working Paper. Gainesville: University of Florida Institute of Food and Agricultural Sciences.

Guan, Z., F. Wu, F. M. Roka, and A. Whidden. 2015. "Agricultural labor and immigration reform." Choices 30(4): 1–9.

Harvest CROO Robotics. 2018. "Harvest CROO Robotics." Accessed December 14, 2018. http://harvestcroorobotics.com

Luvisi, A., Y. Ampatzidis, and L. De Bellis. 2016. "Plant pathology and information technology: Opportunity and uncertainty in pest management." Sustainability 8(8): 831. doi:10.3390/su8080831.

Roka, F. M., S. Simnitt, and D. Farnsworth. 2017. "Pre-employment costs associated with H-2A agricultural workers and the effects of the '60-minute rule.'" International Food and Agribusiness Management Review 20(3): 335–346.