This second publication in the Savvy Survey Series provides Extension faculty with additional information about using surveys in their everyday Extension programming. The publication suggests how surveys can be used in need and asset assessments to inform program development, as formative and summative evaluations to support program improvement, and as customer service tools to capture satisfaction within programming efforts. This publication also introduces the concept of using logic models to guide questionnaire development and discusses general data types (demographics, factual information, attitudes and opinions, and behaviors and events).

Surveys in the Extension World

Most Extension programs are designed to bring about changes in participant awareness, knowledge, attitudes, or aspirations, often with an end goal of producing subsequent changes in behavior and improved social, economic, or environmental conditions. Whether for accountability purposes or future program planning, Extension professionals need accurate, reliable methods for identifying client needs and/or capturing changes that occur due to activities in the program. Many methods exist for assessing needs and measuring change, including survey research.

Though survey research refers to "any measurement procedures that involve asking questions of respondents" (Trochim, 2006), it is far more than just questions on a piece of paper. Dillman, Smyth, and Christian (2014) developed a method for designing surveys that promotes several key features necessary for generating both quality and quantity in responses. Referred to as the Tailored Design Method (TDM), this approach is based on three fundamental concepts: error reduction, survey procedure construction, and positive social exchange (Dillman et al., 2014). Each of these concepts can be applied in a variety of settings and is appropriate in each of the three situations often encountered by Extension professionals: need and asset assessments, formative and summative evaluations, and customer satisfaction evaluations.

Need and Asset Assessment

Extension professionals work with a variety of communities and associated clienteles within their local region. Each community has at its core both needs and assets that can potentially contribute to the success or failure of programming efforts. Furthermore, these communities are often dynamic, changing as the years pass by. It is crucial for agents to maintain an active and accurate understanding of the needs and assets that each community possesses. To be precise, needs are "the measurable gap between two conditions—'what is' (the current status or state) and 'what should be' (the desired status or state)" (Altschuld & Kumar, 2010). Assets, on the other hand, represent the strengths or protective factors that exist within a community (Mertens & Wilson, 2012). To better understand and gauge these community attributes, Extension professionals often turn to need and asset assessments (NAA).

Need and asset assessments are valuable tools that can be used to lay the foundation for a new program or to restructure an established one (Mertens & Wilson, 2012; Rossi, Lipsey, & Freeman, 2004). The NAA goes beyond a basic needs assessment by providing Extension faculty with insights not only into the needs of the target audience at a given point in time, but also into the assets or strengths of the community. NAA can also be used to assess whether an established program is adequately meeting the current needs of the target audience (Mertens & Wilson, 2012). However, NAA is more complex than simply asking people what they have and what they need (Altschuld & Kumar, 2010). Instead, it is a multi-step process that explores what is already known about the community and collects new information about the target group. Surveys can be a useful tool in this multi-step process.

Surveys can be used to collect new information from members of a target audience regarding community needs and gaps in service, as well as community strengths and opportunities. Respondents may also be able to give insight into existing programs, providing a history of those efforts (Mertens & Wilson, 2012). Surveys may also be able to identify existing formal, informal, and potential leaders within the group (Mertens & Wilson, 2012). These types of efforts to understand the audience and gain local input will help to create momentum for project activities, while building credibility within the community (Mertens & Wilson, 2012). A recent evaluation of cooperative Extension educators serving Latino populations in the South emphasizes the value of performing a need and asset assessment within programming efforts (Herndon, Behnke, Navarro, Daniel, & Storm, 2013).

Formative and Summative Evaluation

In addition to performing need and asset assessments, Extension professionals can also use other processes to assess the health of their current programming efforts. One of the best ways to evaluate current efforts is through formative and summative evaluation. A formative evaluation, designed to guide program improvement, is conducted during the development or delivery of the program (Mertens & Wilson, 2012). An agent who is conducting a program over the course of a three-month period may choose to perform several small-scale formative evaluations along the way to make sure that the program is moving along according to plan. Using a questionnaire that has been designed to complement the overall evaluation plan, the agent can seek timely feedback from program participants that can direct the agent on how to adjust the program for greatest impact.

Summative evaluations, on the other hand, are performed near the end or upon completion of a program in order to provide an overall assessment of the program's effectiveness (Mertens & Wilson, 2012; Rossi et al., 2004). Extension faculty may elect to provide program participants with a summative evaluation at the immediate conclusion of the program, or they may opt to delay collection, asking participants to respond after a designated period of time. This type of feedback provides valuable information about the overall success of the program, which is often necessary for accountability and reporting purposes throughout the year. See Davis, Burggraf-Torppa, Archer, and Thomas (2007) for an example of a program that incorporates both formative and summative evaluation.

Customer Satisfaction

The world of the Extension professional is not limited to programs alone. There are many times that an agent might receive a phone call or office visit from a client seeking information. These interactions with the community can be just as valuable as those created through programs and should also be assessed. One common way to capture client perceptions regarding these types of interactions is through client experience or customer satisfaction surveys. Such surveys can be used to identify perceptions about the quality of services provided by Extension, while also uncovering attributes such as program parity (how well the program represents the demographics of the surrounding county). One example of a customer satisfaction survey that explores the influence that type of contact has on client satisfaction is presented in the work of Galindo-Gonzalez and Israel (2010).
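One way to make the idea of program parity concrete is to compare each demographic group's share of program participants with its share of the county population. The sketch below is only an illustration: the group labels, the counts, and the choice of a simple percentage-point gap as the parity measure are all assumptions, not a method prescribed by the sources cited here.

```python
# Hypothetical counts for illustration only; not real Extension data.
county_population = {"Group A": 6000, "Group B": 3000, "Group C": 1000}
program_participants = {"Group A": 70, "Group B": 20, "Group C": 10}


def shares(counts):
    """Convert raw counts into proportions that sum to 1."""
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def parity_gaps(participants, population):
    """Participant share minus county share, in percentage points, per group.

    Positive values mean a group is overrepresented among participants;
    negative values mean it is underrepresented.
    """
    p, c = shares(participants), shares(population)
    return {group: round(100 * (p.get(group, 0.0) - c[group]), 1) for group in c}


gaps = parity_gaps(program_participants, county_population)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.1f} percentage points")
```

In this made-up case, Group A is overrepresented by 10 percentage points and Group B is underrepresented by the same amount, which might prompt an agent to look at how the program is being promoted to Group B.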

Florida Cooperative Extension agents statewide are also asked to collect contact data from customers (phone calls, office visits, and program participants) over a one-month period each year. Annually, a rotating sample of 13 or 14 counties is selected to take part in the statewide Florida Cooperative Extension Service's customer satisfaction survey. However, this process can also be used at the county level to better understand perceptions of local clientele. For a more detailed explanation of how the customer satisfaction survey is conducted for Florida Extension, see Israel (2022).

Defining the Question

Once the purpose of the survey has been determined (need and asset assessment, formative or summative evaluation, or customer satisfaction survey), it is time to begin designing the questionnaire. To create an instrument that will provide useful information, it is important to pay close attention to a variety of design details. A number of resources provide in-depth information on developing data collection instruments (Dillman, Smyth, & Christian, 2014; de Leeuw, Hox, & Dillman, 2008); however, one great starting place for developing a questionnaire for a program is the program's logic model.

The Logic Model

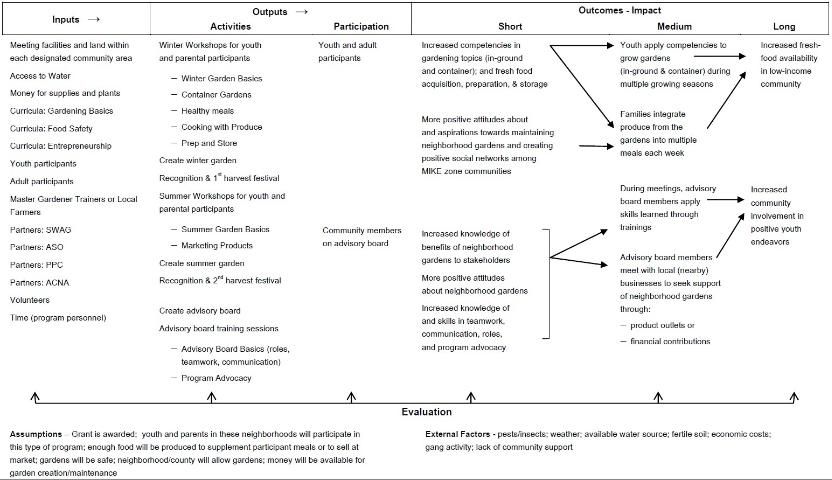

Program planners and evaluators throughout Extension often recommend the creation of a logic model during the beginning stages of program development (Israel, 2001; 2010). A logic model is a useful tool because a well-constructed model provides users with a visual representation of the program's developmental logic (Seevers & Graham, 2012). Because the logic model involves identifying the inputs, outputs, and intended outcomes for a prescribed plan of action, the logic model has the potential to serve as the guiding force behind the types of questions that would be of greatest interest in a variety of survey settings. This is especially true when the elements of the program's context, major activities, sequence of outcomes, and causal connections between the parts are clearly specified. University of Wisconsin-Extension (2013) provides detailed information on developing logic models.

To better understand how a questionnaire might be constructed, consider the following example:

Over the past decade, a group of neighborhood communities in one Florida county has been identified by the local Sheriff's Department as having the highest crime levels for that county. Youth in these neighborhoods are faced with persistent poverty and gang activity. Extension faculty have developed a neighborhood gardens project to provide positive development opportunities for youth, as well as a source of supplemental high-nutrient foods for low-income families in the impacted areas. The program will increase youth and adult knowledge and skills to grow and market the produce from the gardens to nearby community outlets and expand the opportunity to build positive social networks within and between these communities. The logic model for this program can be found in Figure 1.

Credit: J. L. O'Leary

This detailed logic model provides the coordinator with several potential directions for developing a questionnaire. Consider the following potential examples for each of the survey scenarios:

- A need and asset assessment sent to neighborhood residents in the impacted communities designed to capture details about the nutritional and market-based needs as well as current assets of the communities (it may also reveal other more pressing problems or potential partner organizations).

- A formative evaluation questionnaire that asks program participants to assess their current knowledge and skills based on what they have learned after the first two sessions.

- A summative evaluation questionnaire that asks program participants to assess how their marketing strategies have changed six months after completing the workshops.

- A customer satisfaction questionnaire that asks whether the inputs (staff, volunteers, materials, equipment, facilities) and outputs (delivery of service, topics covered, resources generated) met the expectations of the clientele.
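The link between the survey scenarios above and the parts of a logic model can be sketched as a small data structure. This is a minimal illustration, not a transcription of Figure 1: the `LogicModel` class, the `SURVEY_FOCUS` mapping, and the entries in each component are hypothetical and are paraphrased from the neighborhood gardens description.

```python
from dataclasses import dataclass, field


@dataclass
class LogicModel:
    """Illustrative logic model; entries paraphrase the text, not Figure 1."""
    situation: list = field(default_factory=list)
    inputs: list = field(default_factory=list)
    outputs: list = field(default_factory=list)
    short_term_outcomes: list = field(default_factory=list)
    later_outcomes: list = field(default_factory=list)


# Assumed mapping from survey purpose to the components it draws on,
# following the four example questionnaires listed above.
SURVEY_FOCUS = {
    "need_and_asset_assessment": ["situation"],
    "formative_evaluation": ["short_term_outcomes"],
    "summative_evaluation": ["later_outcomes"],
    "customer_satisfaction": ["inputs", "outputs"],
}


def question_topics(model, purpose):
    """Return the logic model entries a questionnaire of this purpose could cover."""
    topics = []
    for component in SURVEY_FOCUS[purpose]:
        topics.extend(getattr(model, component))
    return topics


gardens = LogicModel(
    situation=["nutritional needs", "market-based needs", "community assets"],
    inputs=["staff", "volunteers", "materials", "equipment", "facilities"],
    outputs=["delivery of service", "topics covered", "resources generated"],
    short_term_outcomes=["knowledge and skills to grow produce"],
    later_outcomes=["changed marketing strategies", "positive social networks"],
)

for purpose in SURVEY_FOCUS:
    print(purpose, "->", question_topics(gardens, purpose))
```

Keeping the mapping separate from the model makes it easy to reuse the same logic model for a different survey purpose without rewriting the list of program components.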

General Data Types

Once the direction of the questionnaire is clear, it is time to begin thinking about the type of questions that may be asked. In general, there are three data types that are captured in questionnaires: demographic and factual information; attitudes and opinions; and behaviors and events. Different data types require different question types to generate the most accurate answers; when developing the questionnaire, consider which types of questions will generate accurate data.

The following sections provide a brief introduction to the types of questions that may be asked in a questionnaire. There are a number of ways to phrase questions for different types of data; a more thorough discussion of questionnaire construction is provided in other Savvy Survey Series publications.

Demographic and factual information. Demographics are included to provide information about the characteristics of the individuals who were included in the surveyed group. Demographic information is also useful for identifying market segments that have specific needs and assets. Demographic information is a type of factual data that respondents can easily recall and provide on the survey and is thus easier to accurately capture than other types. Some common demographic items include:

- Age

- Gender

- Race

- Ethnicity

- Education level

- Marital status

- Employment status

- Occupation

- Socioeconomic status

- Language usually spoken at home

- Household income level (monthly, annual)

- Number in household (and/or number under certain age)

- Region/Location of residence

- Type of residence (apartment, home, etc.)

- Home ownership status (own, rent)

Additionally, a questionnaire may include items that ask for other factual information. For example, homeowners may be asked what brand of fertilizer they spread onto their lawns each year or whether they have installed an in-ground irrigation system.

Attitudes and opinions. Questionnaires will also frequently ask people what they think or feel about a particular topic. These items are often based on topics that respondents may have spent little time thinking about, so respondents will need more time and effort to construct a response. In this item type, different elements of the question can influence the type of answer generated; therefore, it is important to consider visual layout, word choices, and response options carefully. To clarify how these items may be used, consider the following examples for the neighborhood gardens scenarios:

- Assess attitudes towards the concept of neighborhood gardens.

- Assess attitudes of local residents towards the idea of increasing daily vegetable and fruit intake.

- Assess opinions of local residents regarding preferred vegetables or plants to be grown in the neighborhood garden.

Behaviors and events. Finally, questionnaires can also be used to ask people about their skills, behaviors, or events that occurred over a period of time. Often to an even greater extent than with questions about attitudes and opinions, questions about behaviors and events require people to spend time and effort to construct a response. People's memory for behavioral or event-based scenarios is often distorted, especially with the passage of time.

Fortunately, questionnaires can be constructed in such a way as to aid the respondent with the recall process. The design of the items and the questionnaire layout are particularly important here. Be mindful of visual layout, word choices, and response options to avoid influencing the answer generated. Consider the following examples for the neighborhood gardens scenarios:

- Ask youth which gardening skills they have used most often to tend to their portion of the neighborhood gardens.

- Ask families involved in the project what types of nutritional behavior changes they have made since beginning the program.

- Ask local residents whether they have noticed an increased availability of nutritional products in nearby stores since the start of the program.
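When drafting a questionnaire, it can help to tag each item with one of the three data types described above and check that no type has been overlooked. The following is a minimal sketch under stated assumptions: the `DataType` enum and the draft item wordings are hypothetical and are loosely based on the neighborhood gardens examples.

```python
from collections import Counter
from enum import Enum


class DataType(Enum):
    DEMOGRAPHIC_FACTUAL = "demographic and factual information"
    ATTITUDE_OPINION = "attitudes and opinions"
    BEHAVIOR_EVENT = "behaviors and events"


# Hypothetical draft items, worded for illustration only.
draft_items = [
    ("What is your age?", DataType.DEMOGRAPHIC_FACTUAL),
    ("Do you own or rent your home?", DataType.DEMOGRAPHIC_FACTUAL),
    ("How do you feel about having a garden in your neighborhood?",
     DataType.ATTITUDE_OPINION),
    ("Which vegetables would you most like to see grown?",
     DataType.ATTITUDE_OPINION),
    ("Which gardening skills have you used most often?",
     DataType.BEHAVIOR_EVENT),
]

# Count items per data type and list any type with no items at all.
counts = Counter(data_type for _, data_type in draft_items)
missing = [t for t in DataType if counts[t] == 0]

for data_type in DataType:
    print(f"{data_type.value}: {counts[data_type]} item(s)")
if missing:
    print("No items yet for:", ", ".join(t.value for t in missing))
```

A tally like this does not say whether the items are well written; it only flags whether the draft covers each data type the survey is meant to capture.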

In Summary

This publication in the Savvy Survey Series introduces information about using survey questionnaires in everyday Extension programming. Surveys can be used in need and asset assessments to inform program development, as formative and summative evaluations to support program improvement, and as customer service tools to capture satisfaction within programming efforts. This publication also introduces the concept of using logic models to guide questionnaire development, while also discussing general data types (demographics, factual information, attitudes and opinions, and behaviors and events).

References

Altschuld, J. W., & D. D. Kumar. (2010). Needs assessment. Thousand Oaks, CA: SAGE Publications.

Davis, G. A., C. Burggraf-Torppa, T. M. Archer, & J. R. Thomas. (2007). Applied research initiative: Training in the scholarship of engagement. Journal of Extension, 45(2). Retrieved from https://archives.joe.org/joe/2007april/a2.php

de Leeuw, E. D., J. J. Hox, & D. A. Dillman, Eds. (2008). International handbook of survey methodology. New York, NY: Lawrence Erlbaum Associates.

Dillman, D. A., J. D. Smyth, & L. M. Christian. (2014). Internet, mail, phone, and mixed-mode surveys: The tailored design method (4th ed.). Hoboken, NJ: John Wiley and Sons.

Galindo-Gonzalez, S., & G. D. Israel. (2010). The influence of type of contact with Extension on client satisfaction. Journal of Extension, 48(1). Retrieved from https://archives.joe.org/joe/2010february/a4.php

Herndon, M. C., A. O. Behnke, M. Navarro, J. B. Daniel, & J. Storm. (2013). Needs and perceptions of Cooperative Extension educators serving Latino populations in the South. Journal of Extension, 51(1). Retrieved from https://archives.joe.org/joe/2013february/a7.php

Israel, G. D. (2001). Using logic models for program development. AEC360. Gainesville: University of Florida Institute of Food and Agricultural Sciences. Retrieved from https://edis.ifas.ufl.edu/publication/WC041

Israel, G. D. (2010). Logic model basics. Gainesville: University of Florida Institute of Food and Agricultural Sciences. Retrieved from https://edis.ifas.ufl.edu/publication/WC106

Israel, G. D. (2022). Florida Cooperative Extension Service's customer satisfaction survey protocol. Retrieved from https://pdec.ifas.ufl.edu/satisfaction/Client%20Experience%20Survey%20Protocol%20-%202022.pdf

Mertens, D. M., & A. T. Wilson. (2012). Program evaluation theory and practice: A comprehensive guide. New York: Guilford Press.

Rossi, P. H., M. W. Lipsey, & H. E. Freeman. (2004). Evaluation: A systematic approach (7th ed.). Thousand Oaks, CA: SAGE Publications.

Seevers, B., & D. Graham. (2012). Education through Cooperative Extension (3rd ed.). Fayetteville, AR: University of Arkansas.

Trochim, W. M. K. (2006). Survey research. In Research Methods Knowledge Base. Retrieved from https://conjointly.com/kb/survey-research/

University of Wisconsin-Extension. (2013). Logic model. Retrieved from https://fyi.extension.wisc.edu/programdevelopment/logic-models/