Importance-performance analysis can reveal whether Extension clients are satisfied with the elements of programs they consider most important. This document was developed for Extension professionals and it may also be useful to a broad range of practitioners who plan and evaluate programs. This is the third EDIS document in a series of three on using importance-performance analysis to prioritize Extension resources, and it covers gap analysis as a means to understand a client's perceptions and rank Extension priorities. Gap analysis is one of two ways to analyze IPA data. The other articles in this series can be found at https://edis.ifas.ufl.edu/collections/series_importance-performance_analysis.

Overview

Importance-performance analysis, or IPA, is used to gauge how people feel about the quality of service they have received and certain characteristics of a place, issue, or program (Martilla & James, 1977; Siniscalchi et al., 2008). Extension professionals can use IPA to make decisions and prioritize resources by identifying the level of importance and satisfaction clients associate with specific attributes of a program or facility. It is important to identify attributes with the highest mean importance scores and the lowest mean satisfaction scores, but considering mean importance or mean satisfaction scores separately is not sufficient to make resource allocation or communication decisions (Levenburg & Magal, 2005). To make informed decisions regarding resource allocation or the design of communication messages pertaining to specific issues, we should analyze importance and satisfaction scores jointly by assessing the gaps between these measures.

As described in the first publication in this series (https://edis.ifas.ufl.edu/wc250), importance is defined as the perceived value or significance felt by a clientele for an attribute of interest (Siniscalchi et al., 2008). Performance is defined as the judgment made by a clientele about the extent to which that attribute of interest is successful (Levenburg & Magal, 2005). Operationally, satisfaction with an attribute of interest is used to define performance.

Collecting IPA Data

IPA data may be collected in numerous ways. The goal is to obtain a numerical measure of both satisfaction (i.e., performance) and importance for each attribute of interest.

- First, it is necessary to identify the attributes being measured. These attributes may be related to programmatic goals, site characteristics, or management features.

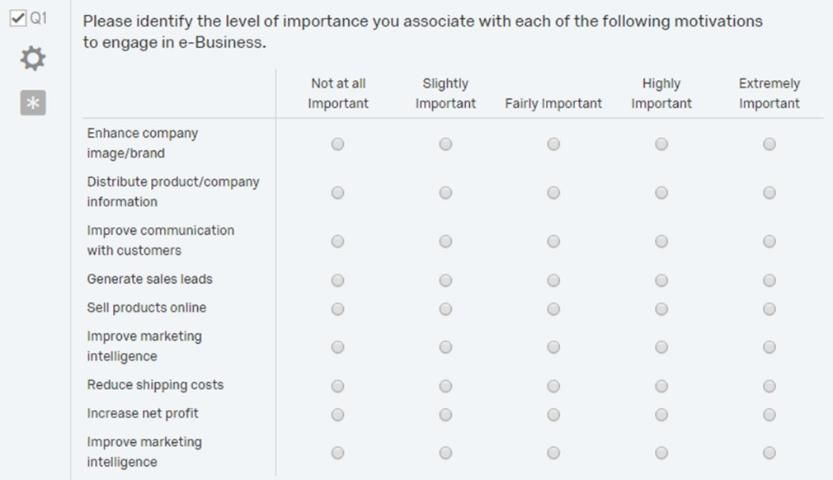

- Next, develop a questionnaire, with Likert or Likert-type scales corresponding to importance of and satisfaction with each attribute (see Figure 1). Likert scales are ordered, five-to-nine-point response scales commonly used to obtain respondents' preferences or agreement/disagreement with a particular statement or a group of statements (Bertram, 2006).

Credit: Adapted from "Applying importance-performance analysis to evaluate e-business strategies among small firms" by N. M. Levenburg and S. R. Magal, 2005, e-Service Journal, 3(3), p. 38.

Often, single items are used for attributes. However, data may be more accurate when you create an index consisting of several items. The following is an example of different indexes and the individual items forming those indexes (see Table 1).

3. During questionnaire development, test the questionnaire for its validity and reliability. For further details about establishing validity and reliability of questionnaires, please read The Savvy Survey #8: Pilot Testing and Pretesting Questionnaires, https://edis.ifas.ufl.edu/pd072, and The Savvy Survey #6d: Constructing Indices for a Questionnaire, https://edis.ifas.ufl.edu/pd069.

4. During pilot testing, you may find that changes need to be made to the questionnaire to establish validity and reliability. After all changes are made, the instrument will be ready for data collection.

5. To collect data, distribute the questionnaire to target audience members along with clear instructions for each respondent to assign an importance and satisfaction score to each identified attribute in the questionnaire (see Figure 1).
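The index approach mentioned in the steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the item names and scores are hypothetical, and averaging the items is only one common way to form an index (an assumption here; see The Savvy Survey #6d for guidance on constructing indices).

```python
from statistics import mean

# Hypothetical responses from one respondent on a 5-point scale.
# The item names and their grouping into one index are illustrative only.
importance_items = {"item_a": 5, "item_b": 4, "item_c": 4}
satisfaction_items = {"item_a": 3, "item_b": 2, "item_c": 4}


def index_score(item_scores):
    """Average several item scores into a single index score."""
    return mean(item_scores.values())


importance_index = index_score(importance_items)
satisfaction_index = index_score(satisfaction_items)

print(f"Importance index: {importance_index:.2f}")
print(f"Satisfaction index: {satisfaction_index:.2f}")
```

Each respondent then contributes one importance score and one satisfaction score per index, and these can be analyzed in the same way as single-item attributes.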

Calculating IPA Gaps

Extension professionals may choose to conduct gap analysis, draw visual maps, or do both. Gap analysis can be used to quantify the difference between a client's satisfaction with and their perceived importance of specific attributes. To conduct gap analysis, first calculate the mean importance and mean satisfaction scores for each identified attribute or index of attributes. Next, subtract the mean importance score for each attribute from the respective mean satisfaction score (gap = mean satisfaction − mean importance). The resulting difference is the gap in satisfaction for the identified attribute.

A negative gap value indicates dissatisfaction with an important attribute. The more negative the gap value, the further satisfaction falls short of importance. To prioritize resources, rank order the gaps from most negative to most positive (see Table 2).
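The gap calculation and ranking described above can be sketched as follows. The attribute names and ratings are made up for illustration and are not taken from Table 2; the function name `compute_gaps` is likewise hypothetical.

```python
from statistics import mean

# Hypothetical ratings (1-5 scale) from four respondents per attribute.
# Attribute names and scores are invented for illustration.
responses = {
    "attribute_a": {"importance": [5, 4, 5, 4], "satisfaction": [3, 2, 3, 2]},
    "attribute_b": {"importance": [3, 3, 4, 2], "satisfaction": [4, 4, 4, 4]},
    "attribute_c": {"importance": [4, 5, 4, 5], "satisfaction": [4, 4, 4, 4]},
}


def compute_gaps(data):
    """Return {attribute: mean satisfaction - mean importance}."""
    return {
        name: mean(scores["satisfaction"]) - mean(scores["importance"])
        for name, scores in data.items()
    }


gaps = compute_gaps(responses)

# Rank from most negative (highest priority) to most positive.
ranked = sorted(gaps.items(), key=lambda pair: pair[1])
for name, gap in ranked:
    print(f"{name}: {gap:+.2f}")
```

With these invented numbers, attribute_a has the most negative gap and would be ranked first for attention, while attribute_b has a positive gap and would be ranked last.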

Table 2. Mean importance and satisfaction scores, gaps, and paired t-test p values for small firms' motivations to engage in e-business.

A paired t-test can be conducted on the importance and satisfaction means to determine whether there is a significant difference between the means or whether this difference is due to random chance (see Table 2). Based on the results of the paired t-test, we can decide which satisfaction-importance gaps are significant and thus should be considered for our programming needs (Levenburg & Magal, 2005; Martilla & James, 1977). For further details about conducting t-tests, please review The Savvy Survey #16: Data Analysis and Survey Results, https://edis.ifas.ufl.edu/pd080.
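As a rough sketch of what the paired t-test computes, the following Python example calculates the t statistic and degrees of freedom for one attribute using only the standard library. The ratings are hypothetical, and the helper `paired_t` is an illustrative name rather than part of any package. Obtaining the p value requires the t distribution, which statistical software provides (for example, `scipy.stats.ttest_rel` reports it directly).

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(satisfaction, importance):
    """Return (t statistic, degrees of freedom) for paired ratings.

    Each position in the two lists must come from the same respondent.
    """
    if len(satisfaction) != len(importance):
        raise ValueError("Each respondent needs both ratings.")
    # Per-respondent difference: satisfaction minus importance.
    diffs = [s - i for s, i in zip(satisfaction, importance)]
    n = len(diffs)
    # Standard error of the mean difference (undefined if all diffs are equal).
    std_error = stdev(diffs) / sqrt(n)
    return mean(diffs) / std_error, n - 1


# Hypothetical ratings of one attribute by six respondents (1-5 scale).
satisfaction = [3, 2, 3, 2, 4, 3]
importance = [5, 4, 5, 4, 5, 5]

t_stat, df = paired_t(satisfaction, importance)
print(f"t = {t_stat:.2f}, df = {df}")
```

A large negative t statistic, as in this invented example, suggests that satisfaction is consistently lower than importance across respondents rather than differing by chance.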

Interpreting IPA Gaps

The issues or attributes with the greatest negative gaps should be considered the most urgent and the most in need of time and resources. Based on the data presented in Table 2, the largest negative gap corresponds to marketing, so time and effort should be concentrated on improving marketing strategies of small e-firms. Remember that gaps can be positive or negative. A positive gap indicates that the audience's satisfaction with an attribute is higher than the importance they associate with it, while a negative gap indicates that satisfaction with an attribute is lower than its importance (Levenburg & Magal, 2005). Therefore, Extension professionals are advised to focus on the items with the largest statistically significant negative gaps because these items are very important to Extension clients but are not performing well.
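The prioritization rule described above can be sketched as a simple filter and sort. The gaps and p values below are hypothetical, and the 0.05 significance threshold is an assumption chosen for illustration; use whatever threshold suits your evaluation.

```python
ALPHA = 0.05  # assumed significance threshold, not specified in this document

# Hypothetical (gap, p value) pairs for four attributes.
results = {
    "attribute_a": (-2.00, 0.001),
    "attribute_b": (1.00, 0.020),
    "attribute_c": (-0.50, 0.300),
    "attribute_d": (-1.20, 0.010),
}


def priorities(results, alpha=ALPHA):
    """Return attributes with significant negative gaps, most negative first."""
    flagged = [
        (name, gap)
        for name, (gap, p_value) in results.items()
        if gap < 0 and p_value < alpha
    ]
    return sorted(flagged, key=lambda pair: pair[1])


for name, gap in priorities(results):
    print(f"{name}: {gap:+.2f}")
```

In this invented data set, attribute_c is excluded because its negative gap is not statistically significant, and attribute_b is excluded because its gap is positive.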

Conclusion

Gap analysis using IPA is an innovative and useful approach for Extension educators to conduct needs assessments and to evaluate programs. With needs assessments conducted via IPA gap analysis, Extension educators can prioritize which issues should be emphasized (largest significant negative gaps) and which issues should be de-emphasized (positive or non-significant gaps). Gap analysis can be conducted for any issue or program that needs to be addressed or evaluated by Extension educators. By using IPA gap analysis, Extension professionals can develop increasingly impactful programs and efficiently use their available resources. Extension educators are encouraged to use this approach in their programming efforts to better serve target audiences by addressing their most immediate needs.

Acknowledgements

The authors thank Randy Cantrell, Katie Stofer, and Yilin Zhuang for their helpful input on an earlier draft of this document.

References

Bertram, D. (2006). "Likert scales." (No longer available online.)

Levenburg, N. M., & Magal, S. R. (2005). Applying importance-performance analysis to evaluate e-business strategies among small firms. e-Service Journal, 3(3), 29–48. https://doi.org/10.2979/esj.2004.3.3.29

Martilla, J. A., & James, J. C. (1977). Importance-performance analysis. Journal of Marketing, 41(1), 77–79. https://doi.org/10.2307/1250495

Siniscalchi, J. M., Beale, E. K., & Fortuna, A. (2008). Using importance-performance analysis to evaluate training. Performance Improvement, 47(10), 30–35. https://doi.org/10.1002/pfi.20037